The case for healthy engineering performance management

When capital was cheap and talent was scarce, performance management in software engineering was laser-focused on keeping people. “Below expectations” was a rating you used sparingly, and rarely needed.

That era is over. Post-ZIRP, with AI compressing what headcount companies think they need, “below expectations” is now handed out routinely — partly because it provides useful paperwork when layoffs come, and partly because in a leaner environment, the bar for “meets expectations” really has risen.

What hasn’t changed, however, is what the research says about high-performing teams. Google invested heavily in trying to understand what made them effective and the answer came down to trust, clarity, and whether people felt safe enough to take interpersonal risks. These conditions, the ones that help people do their best work, haven’t changed even as the business context around them has been turned on its head.

This post is mostly about those fundamentals, because they’re still what determine whether a performance process is useful, or punishing, to engineers. Even in organizations where ratings are being used for headcount decisions, there’s usually still room to run the process — the 1:1s, the feedback conversations, the data-gathering, the self-reviews, the calibration — in ways that are honest and fair.

A note on scale

How performance management works, and what it needs to do, changes a lot depending on what scale your organization is at:

- Fewer than 50 engineers: Things are mostly informal, and that’s fine. Managers have direct visibility into the work, feedback happens in the moment, and expectations get shaped through daily collaboration rather than documentation. At this stage, you’re probably not writing leveling rubrics yet.

- 50–200 engineers: This is where formal systems start appearing: frameworks, career ladders, structured review cycles. Which makes sense, because you can no longer rely on everyone knowing what good looks like. The distance between leadership and the people doing the work starts to grow, and that changes what performance management needs to do.

- 500+ engineers: Now we’re in the territory of calibration sessions, rating distributions, managers presenting their engineers to a room of senior leaders who’ve never worked with them.

Organizational growth tends to erode the proximity that good performance management depends on. Managers get further from the day-to-day; context gets filtered through summaries and calibration sessions.

More process doesn’t exactly fix that. What does fix it: keeping managers close enough to the work to form their own view of it, building structured ways for engineers to surface their own contributions rather than relying on what their manager happened to notice, and using data to recover some of the proximity that growth takes away.

Teams first, individuals second

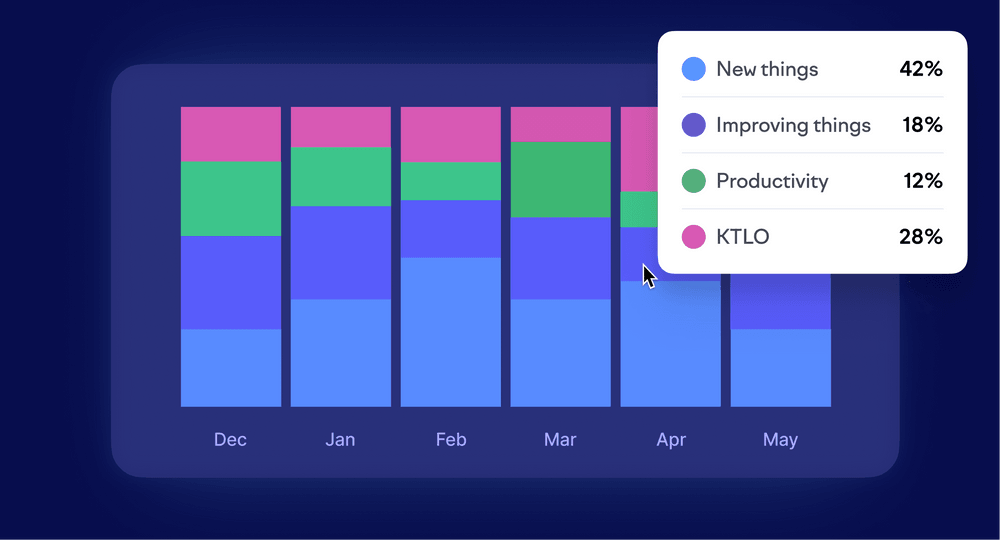

Performance management defaults to the individual as its unit of analysis. But most outcomes in a software organization are produced by teams.

Before evaluating individuals, it helps to ask whether the team is set up to succeed — whether priorities are clear, whether the investment balance makes sense, whether structural constraints are creating the problems that get attributed to people. Individual performance exists within that context, not separate from it.

When you skip that step, you end up with performance conversations that assign individual blame for what are really team-level or organizational problems. An engineer’s cycle time doesn’t mean much if the team’s review process is broken. Someone’s output looks different when they’re carrying half the on-call rotation.

Necessary conditions for honest conversations

Before getting into frameworks and cadence, remember that the person sitting across from you (in a 1:1, in a review conversation, in a conversation about an improvement plan) has a whole life happening outside of work. None of that makes performance conversations less necessary, but how you have them matters more than most managers think.

Building psychological safety means people feel they can take interpersonal risks: ask for help, admit mistakes, disagree with a call, surface a problem they’ve spotted. Teams with high psychological safety tend to argue more than those without it, not less — the difference is that the arguments are about ideas and approaches rather than about whether it’s safe to have them. A concern that comes up often is that focusing on this means letting things slide. It doesn’t. Safety is what makes candor (radical or otherwise) possible.

You can’t have an effective performance conversation with someone who doesn’t feel safe. They’ll show up, but they won’t hear the feedback the way you intend it. Without safety as a foundation, feedback lands as a threat, growth conversations trigger anxiety, and anything framed as performance-related gets filtered through the assumption that something bad must be on its way.

A few things can help these conversations go well:

- Establishing shared facts before evaluation. Jumping straight to judgment before both people have a shared picture of what happened is a common failure. Starting with the work, the context, the timeline, and the decisions that were made gives both people something concrete to react to. It’s much harder to feel blindsided by a conclusion when you both walked through the evidence that led to it.

- Separating the diagnosis from the prescription. Skill gap, motivation problem, or fit issue — they can look similar from the outside and they call for completely different responses. The diagnosis has to come first, and it comes from understanding the work, not looking at metrics alone.

- Naming problems early. When something isn’t working, say so clearly and early, with enough time for the engineer to do something about it. Managing someone through months of hedged, vague feedback before reaching a conclusion they could have responded to earlier delays the inevitable, at the engineer’s expense.

What to evaluate in performance reviews

Most managers default to what’s measurable. But while metrics feel concrete, concreteness isn’t the same as accuracy, and most of the metrics that are easy to pull don’t hold up particularly well as a read on individual engineer performance.

I asked Otto Hilska, our CEO, how he thinks about evaluating an engineer’s performance. He’s spent decades building engineering organizations. Four questions came straight off the top of his head:

- What’s the impact of the work? Not how much shipped, but whether what shipped moved a business metric, addressed a concrete problem, or improved something meaningful for the team or for users. Impact looks different for a junior engineer two months in and a staff engineer three years in. Figuring out what impact should look like at a given level is part of the manager’s job.

- How’s the craft? Code quality, thoughtfulness about design, the care someone brings to a code review — and as much about trajectory as current state. Are they getting better? Do they understand why certain approaches hold up better than others? Craft is harder to evaluate from a distance, which is one reason good performance management requires managers who are close to the work.

- What level of challenge can you hand them? Not whether they can complete tasks, but how independently, and how hard can the problem be? This tends to be the clearest signal of where someone is in their development, and it’s what engineers themselves care most about: the sense that they’re being trusted with problems that matter.

- Are they making the team better? Documentation, proactive communication, code reviews that help people grow, being someone their teammates want to work with. This dimension often gets shortchanged, partly because it’s the hardest to evaluate unless you’re close to the work, and partly because some strong individual contributors aren’t particularly good at it, and it’s easier to ignore than to address directly.

These four dimensions should give you a consistent lens to apply across different engineers and different review cycles without reducing anyone’s work to a single number.

Rewarding heroics over foundations

Often, engineering performance management ends up measuring what’s visible rather than what’s important.

Think about what gets recognized in many engineering organizations: the engineer who swooped in over the weekend to fix the production incident, the one who shipped the big feature two weeks early, or the one who worked overtime to get the launch out on time.

Yes, these deserve recognition, but a performance management system that rewards heroics without asking why the incident happened is basically accepting the conditions that required them. Engineers figure this out fast. If fighting fires earns praise and preventing them earns nothing, that’s a signal about what the organization values. The people who burn out first are usually the ones who care too much to let things break.

The same challenge appears in what Tanya Reilly calls “glue work”: code reviews that teach people something, documentation that new hires can use, maintaining the CI pipeline, mentoring, keeping technical debt from compounding. None of it ships on a public roadmap, and in a performance system calibrated around feature delivery, it tends to be invisible, right up until someone leaves and, surprise, no one knows how the deployment pipeline works.

Over time, people notice what gets rewarded and act accordingly. If your process consistently fails to see the work that holds teams together, you’ll end up with engineers competing to ship the next big thing while the important foundation work stops happening.

Data as a conversation-starter, not a conclusion

Engineering data earns its place in a performance conversation when it helps you understand what’s been happening. It doesn’t do much when it tries to replace that understanding.

If a team’s cycle time has been creeping up over a couple of months, that’s something to dig into. Maybe the work is more complex than usual. Maybe reviews are backing up. Maybe someone’s overloaded and hasn’t said anything. You don’t know until you look.

“Your cycle time is in the bottom quartile of the team” skips that step completely. It turns something that needs context into a conclusion, and puts a team-level metric onto one person. Cycle time, shaped as much by waiting as by doing, doesn’t say much about individual performance on its own.
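To make the "waiting versus doing" point concrete, here is a minimal sketch that splits one pull request's cycle time into phases. The `PullRequest` fields, the phase names, and the example timestamps are all assumptions made up for illustration; real tools define and name these stages differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class PullRequest:
    # Hypothetical timestamps; real tools name and define these differently.
    first_commit: datetime
    opened: datetime
    first_review: datetime
    approved: datetime
    merged: datetime


def cycle_time_breakdown(pr: PullRequest) -> dict[str, timedelta]:
    """Split one PR's cycle time into the phases it passed through."""
    return {
        "coding": pr.opened - pr.first_commit,
        "waiting_for_review": pr.first_review - pr.opened,
        "in_review": pr.approved - pr.first_review,
        "waiting_to_merge": pr.merged - pr.approved,
    }


def waiting_share(pr: PullRequest) -> float:
    """Fraction of total cycle time spent in the two waiting phases."""
    phases = cycle_time_breakdown(pr)
    total = sum(phases.values(), timedelta())
    if total == timedelta():
        return 0.0
    waiting = phases["waiting_for_review"] + phases["waiting_to_merge"]
    return waiting / total


# Invented example: a day of coding, then two days before anyone reviews it.
example_pr = PullRequest(
    first_commit=datetime(2024, 3, 4, 9, 0),
    opened=datetime(2024, 3, 4, 17, 0),        # 8 hours of coding
    first_review=datetime(2024, 3, 6, 17, 0),  # 48 hours waiting for review
    approved=datetime(2024, 3, 6, 21, 0),      # 4 hours in review
    merged=datetime(2024, 3, 7, 1, 0),         # 4 hours waiting to merge
)

print(f"{waiting_share(example_pr):.0%} of this PR's cycle time was waiting")
```

In this invented case, most of the elapsed time sits in the review queue rather than with the author, which is the kind of context a single cycle-time number hides.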

Data is much more useful when it helps reconstruct the work: what someone spent their time on, how that connects to team priorities, where things slowed down, who they were collaborating with. That context is harder to maintain as teams grow, which is where engineering intelligence tools can help.

A caveat, though: tools in this space vary considerably. Some reduce an engineer’s work to a productivity score (commits, PRs, lines changed, blended into a composite) and present it in a table that “definitely isn’t stack ranking, we promise”.

These normalized scores are attractive if you want to get performance management done quickly. But they tend to reward the wrong things: they ignore complex work, miss the maintenance and collaboration that holds teams together, and create a false impression of rigor.
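As a rough illustration of how that happens, the sketch below computes a naive composite from commits, PRs, and lines changed. The two engineers, their counts, and the weights are invented for this example, and the formula is an assumption, not any real tool's scoring method.

```python
# Hypothetical activity counts for two engineers over one quarter.
# The numbers are invented purely for illustration.
activity = {
    # Many small, low-risk changes.
    "engineer_a": {"commits": 120, "prs": 40, "lines_changed": 6000},
    # One hard migration, plus reviews and mentoring that produce no counts.
    "engineer_b": {"commits": 25, "prs": 6, "lines_changed": 1500},
}

# Assumed weights for a naive composite; no real tool's formula is implied.
WEIGHTS = {"commits": 0.4, "prs": 0.4, "lines_changed": 0.2}


def composite_score(counts, team_max):
    """Weighted sum of each count, normalized by the team maximum.

    Assumes every team maximum is non-zero.
    """
    return sum(WEIGHTS[k] * counts[k] / team_max[k] for k in WEIGHTS)


team_max = {k: max(person[k] for person in activity.values()) for k in WEIGHTS}
scores = {name: composite_score(counts, team_max) for name, counts in activity.items()}
ranking = sorted(scores, key=scores.get, reverse=True)

for name in ranking:
    print(f"{name}: {scores[name]:.2f}")
```

The score ranks `engineer_a` far ahead, yet nothing in the inputs captures how difficult the migration was or how much review and mentoring `engineer_b` did. The arithmetic is tidy; what it measures is volume.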

All that to say: if you’re pulling data into a performance conversation — from whatever tool — that data should support the conversation, not be the conversation.

Engineering performance management cadence

If regular conversations are happening throughout the year, a formal review becomes a synthesis of things already discussed, not a standalone verdict. When managers save their observations for review cycles, they turn reviews into high-stakes evaluation events that produce defensiveness rather than growth. So try your best to not do that.

Engineers who do strong work but aren’t natural self-advocates are disproportionately harmed by informal, vibes-based processes. When there’s no consistent structure, the engineers who get seen tend to be the ones who are good at being seen, which isn’t always the same as being good at their job.

Cadence is more important than any individual component of the process. The more frequently performance conversations happen, the less weight the formal review has to carry. A rough structure that tends to work regardless of scale:

- Weekly or fortnightly 1:1s are for catching little things before they become bigger problems. Blockers, mood, what’s on someone’s mind. These don’t need an agenda, they just need to actually happen. If someone’s struggling or frustrated, this is where you find out early.

- Monthly conversations are slightly longer and more forward-looking. Less about what’s happening this week, more about whether the person is heading in the right direction. Are they working on the right things? Is there anything getting in their way that isn’t going to surface in a 1:1?

- Quarterly check-ins are more deliberate. You’re looking at the period together: what went well, what didn’t, what the focus should be for the next quarter. This is also where you check in on longer-term development — are people growing in the ways they want to be growing?

- Formal reviews should simply be a synthesis of what’s already been discussed. If the previous three are happening consistently, this one shouldn’t surface anything new. Once or twice a year is a healthy cadence for a formal review.

A good litmus test to know if your process is working: the engineers on your teams know where they stand at any given point in the year.

Doing this as best you can

In some organizations, performance management has become less about development and more about documentation — building the paper trail for headcount decisions that were going to happen anyway. Ratings carry more weight, and the metrics behind them aren’t always chosen for the right reasons.

That part isn’t always something an individual engineering manager can change. What you do still have control over is how the process shows up in practice. People shouldn’t be trying to piece together where they stand from a formal review. Feedback needs to come early enough that there’s still time to respond to it — and that only works if you understand the work in enough detail that you’re not just going off whatever was most visible or most recent.

If all of those pieces are in place, your process should hold up pretty well against the ever-changing-something-landscape we’re all here living in.
