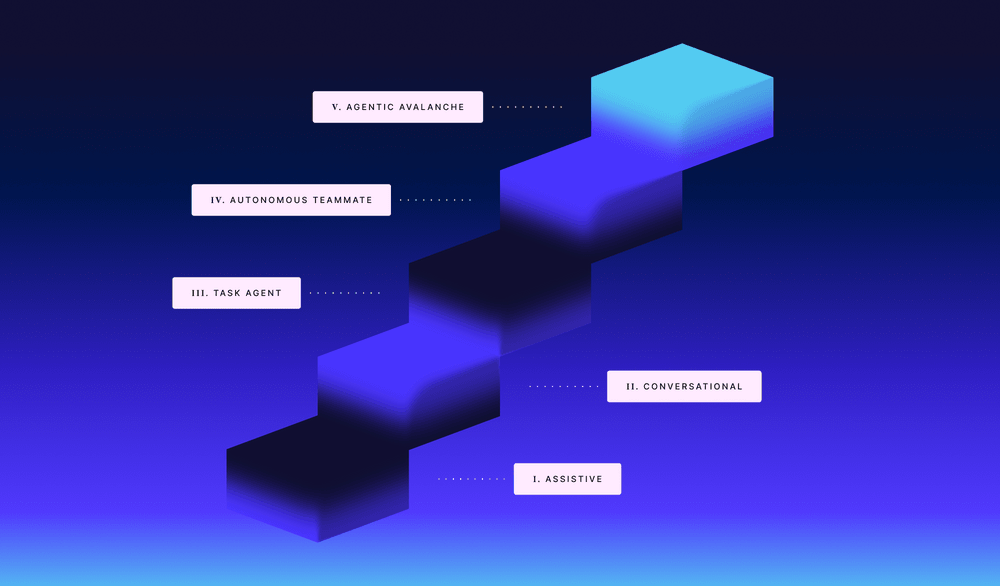

Five levels of AI coding agent autonomy, and why higher isn’t always better

“We’re using AI for coding” can mean a lot of things. Autocomplete in your IDE, a chat interface that knows your codebase, or an agent opening pull requests while you’re on the bus.

The tools are different — different tradeoffs, different failure modes, different things to measure. But many teams treat them as interchangeable, reaching for whichever one is open rather than asking which one fits the task.

The taxonomy in this post has five levels, defined by a single variable: how much of the work does the agent do autonomously before returning to you for feedback? For each level, the goal is the same: understand what it’s actually good for, and when to reach for something else.

It’s not a ranking, and higher is not always better. Think of it like a staffing decision: making an industry expert work as an intern is wasted potential; putting a middle-schooler in the CEO chair is a disaster.

Level 1: Assistive

The first level of autonomy is not really autonomy at all.

Inline suggestions, refactors, and quick fixes in a single file. The agent sees what you’re working on right now and nothing more. And it certainly doesn’t remember anything after you close the window. The core characteristic of this level is manual context management. There’s also a practical reason autocomplete works this way: it needs to respond at every keystroke with sub-second latency, which limits how much context it can realistically use.

Copy-pasting code output from an AI tool into your terminal is another form of Level 1. Talking with ChatGPT may feel conversational, but you’re still managing the context by hand and choosing what to hand the model every time, so it belongs in Level 1 too.

This kind of AI use is mostly an ergonomic improvement, not a productivity transformation. And by design, Level 1 doesn’t scale to multi-file, multi-step problems.

Use Level 1 when: the task is contained to a single file, you want to stay in full control of every change, or you’re getting oriented in an unfamiliar codebase. The setup friction is effectively zero.

Not when: you want to go faster with AI. Level 1 is of little use if the problem spans multiple files or requires the AI to understand broader context. It won’t, because it can’t.

Since Level 1 lives or dies on whether engineers actually use the tool, these are the basic metrics you can keep track of:

- Adoption rate: what share of your engineers have a license and are using it actively?

- Acceptance rate: what share of suggestions do developers accept?

- Opt-out rate: who disabled it, and why?
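
If you want to compute these yourself rather than read them off a dashboard, the arithmetic is simple. Here’s a minimal Python sketch, assuming a hypothetical per-engineer usage export (the `EngineerUsage` fields are made up for illustration and aren’t any vendor’s schema):

```python
from dataclasses import dataclass


@dataclass
class EngineerUsage:
    # Hypothetical per-engineer record for one reporting period.
    has_license: bool
    active: bool    # used the tool at least once in the period
    shown: int      # inline suggestions displayed
    accepted: int   # inline suggestions accepted
    disabled: bool  # turned the tool off


def _ratio(num: float, den: float) -> float:
    # Avoid ZeroDivisionError on empty inputs.
    return num / den if den else 0.0


def level1_metrics(engineers: list[EngineerUsage]) -> dict[str, float]:
    licensed = [e for e in engineers if e.has_license]
    return {
        # Licensed engineers who actually use the tool, over all engineers.
        "adoption_rate": _ratio(sum(e.active for e in licensed), len(engineers)),
        # Accepted over shown, pooled across licensed engineers.
        "acceptance_rate": _ratio(sum(e.accepted for e in licensed),
                                  sum(e.shown for e in licensed)),
        # Licensed engineers who disabled the tool.
        "opt_out_rate": _ratio(sum(e.disabled for e in licensed), len(licensed)),
    }


team = [
    EngineerUsage(has_license=True, active=True, shown=200, accepted=50, disabled=False),
    EngineerUsage(has_license=True, active=True, shown=100, accepted=30, disabled=False),
    EngineerUsage(has_license=True, active=False, shown=0, accepted=0, disabled=True),
    EngineerUsage(has_license=False, active=False, shown=0, accepted=0, disabled=False),
]
print(level1_metrics(team))  # adoption 0.5, acceptance 80/300, opt-out 1/3
```

One design choice worth noticing: the acceptance rate here is pooled, so heavy users weigh more. Averaging per-engineer rates instead would give every engineer equal weight; which one you want depends on the question you’re asking.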

Level 2: Conversational

The second level is like pair programming with someone who’s read the documentation you’ve given them, but nothing more. The agent doesn’t actually know your codebase; it loads what it can into its context window, which means your results are only as good as your AGENTS.md and how well you can point it at the right place to start.

It’s chat, usually, but it navigates your repository, can run tools on request, and writes code across multiple files while you steer it throughout.

In practice, this looks like:

- Asking the agent to plan an approach, reviewing the plan, then proceeding.

- Accepting or rejecting multi-file edits one by one.

- Running tests through the agent and incorporating the results.

- Breaking a complex problem into subproblems and working through them with frequent checkpoints.

- Writing the gnarliest parts yourself while letting the agent handle the scaffolding.

The keyword here is interactive: the agent moves fast, but you always decide where it goes.

The difference from Level 1 is intent. With autocomplete or single-file edits, the agent follows from the sidelines, trying to be helpful without really knowing what you’re trying to accomplish. In conversational mode, you tell it what you want — the goal, the constraints, and the relevant context. This explicit framing is what makes multi-file, multi-step work manageable.

This is still the sweet spot for many developers, especially in larger organizations where the conditions for agentic engineering aren’t there yet. Anthropic’s own engineers and researchers report integrating AI into 60% of their work, but even they continue to maintain active human oversight on 80–100% of tasks. That second number tells you where the center of gravity still is: collaborative, interactive, human-in-the-loop. So, Level 2.

Use Level 2 when: the task requires judgment, spans multiple files, or is ambiguous enough that you need to steer. Think complex features, architectural decisions, anything where “done” is hard to specify upfront.

Not when: the task is so well-defined that you don’t need to be present — that’s what Level 3 is for. Staying at Level 2 for well-scoped tasks just means you’re spending attention you don’t need to spend.

The interesting question at Level 2 is whether engineers are using it purposefully, or just here and there because you’ve asked them to. A few things can help you tell the difference:

- Swarmia’s AI activity views show how developers are using different AI coding modes, including conversational vs. agent-based.

- Developer experience surveys that ask about satisfaction with AI tools can add context to the metrics.

- Which AI tools are being used on which parts of your codebase?
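
The last question is essentially a cross-tabulation. A rough Python sketch, assuming you can already attribute each AI-assisted change to a tool and a file path (how you get that attribution depends on your tooling; the tool names and paths below are hypothetical):

```python
from collections import Counter
from pathlib import PurePosixPath


def tool_usage_by_area(changes: list[tuple[str, str]]) -> Counter:
    """Count AI-assisted changes per (tool, top-level directory)."""
    counts: Counter = Counter()
    for tool, path in changes:
        parts = PurePosixPath(path).parts
        # Files at the repository root get their own bucket.
        area = parts[0] if len(parts) > 1 else "(repo root)"
        counts[(tool, area)] += 1
    return counts


# Hypothetical (tool, changed file) pairs.
changes = [
    ("copilot", "web/src/app.tsx"),
    ("claude-code", "api/handlers/users.go"),
    ("claude-code", "api/db/migrations.sql"),
    ("copilot", "README.md"),
]
for (tool, area), n in tool_usage_by_area(changes).most_common():
    print(f"{tool:12s} {area:12s} {n}")
```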

Level 3: Task agent

The third level is where things start to get interesting: you hand off a task and come back to a pull request. This is the level where “agentic engineering” actually starts.

The agent plans, edits code, runs tests, opens a PR, and responds to review without you sitting there watching.

My rule of thumb for whether something qualifies as a task agent: can you create a pull request from your phone without opening your laptop? If yes, it’s Level 3.

That might look like tagging @Cursor in a Slack thread and seeing a PR appear before your next meeting. Or assigning a GitHub issue to Copilot coding agent. Or triggering Claude Code GitHub Actions by mentioning @claude in a PR comment. In each case, the agent clones your repo, works in an isolated cloud environment, runs your CI, opens a draft PR, and waits for review.

The Level 3 category matured significantly through 2025–2026, and it’s still evolving. GitHub’s Copilot coding agent launched in May 2025. Cursor background agents followed, gaining Slack integration in June 2025 and long-running agents in February 2026. GitHub Agent HQ, launched in February 2026, lets you assign issues to Copilot, Claude Code, or OpenAI Codex agents from within GitHub.

The asynchronous nature is what makes this more than the ergonomic improvements that came before. You fire off a task and forget it, so the barrier to getting something done drops dramatically.

Level 3 agents also leave a paper trail. Every branch, commit, and PR is recorded in version control, often linked back to the original issue. With Level 2, unless the engineer manually commits intermediate work, a lot of the AI’s involvement goes undocumented. That traceability makes it easier to bring AI into team workflows: others can see what the agent did, review it, and even trigger the same process themselves.

As Addy Osmani has framed it, this is the shift from engineers as “conductors” (directing AI in real time) to engineers as “orchestrators” (setting objectives and reviewing outcomes).

But Level 3 only works well if your engineering pipeline is in good shape. This connects to a core finding from the 2025 DORA Report: AI is an amplifier, not a fix. Organizations with strong engineering practices see benefits; those without see their existing bottlenecks made more visible. Specifically, the DORA report found that high AI adoption correlates with larger PRs and longer code review times.

So if your review process is already a bottleneck, Level 3 agents will probably make that worse before they can help make it better.

For now, agents still confidently produce the wrong thing on projects that are complex, ambiguous, or need a lot of context. That said, there’s still value in firing one off before everything is perfectly scoped: if it one-shots a solution, great; if the PR gets closed without being merged, you’ve probably learned something about the problem anyway.

Use Level 3 when: you can describe “done” in a sentence or two with no ambiguity, and you trust CI (or human review) to catch mistakes. A bug report with a clear repro, a dependency update, a feature with a complete spec. These are also exactly the tasks that tend to slip down the backlog because the overhead of triaging and assigning them outweighs the actual work — which is where Level 3 pays off most.

Not when: the task is ambiguous, requires deep context, or your pipeline isn’t solid. Agents are optimistic: they’ll produce something even when they shouldn’t, and a broken CI loop means nothing catches it — at least not until a human has to spend more effort fixing the agent’s mess.

Our team recently released an agent-specific view in Swarmia, so you can keep an eye on how these things are trending:

- Merge rate: what share of agent PRs get merged vs. closed? And more specifically, what kind of tasks were merged, and which weren’t?

- Share of agent PRs: what percentage of all PRs are created entirely by agents?

- Batch size: larger agent PRs generally mean your agents are completing more complex tasks autonomously. Keep in mind, though, that smaller, more focused PRs are faster to review.

- Review time per agent PR: higher review times can result from big batch sizes or messy code, which may mean you need to assign the agents smaller tasks with tighter scopes.

- Team comparisons: which teams are getting value? Which aren’t, and why?

- Trends over time: is agent PR volume growing? What’s driving it?
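
For a sense of what sits behind these numbers, here’s a hypothetical Python sketch of the first four metrics. It isn’t how Swarmia computes them: the `PullRequest` record is invented for illustration, and lines changed stands in for batch size.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PullRequest:
    # Hypothetical PR record; field names are illustrative, not a real API schema.
    by_agent: bool        # created entirely by an agent
    state: str            # "merged", "closed" (without merging), or "open"
    lines_changed: int    # used here as a proxy for batch size
    review_hours: float   # time spent in review


def agent_pr_metrics(prs: list[PullRequest]) -> dict[str, float]:
    agent = [pr for pr in prs if pr.by_agent]
    # Merge rate only counts agent PRs that have reached a final state.
    finished = [pr for pr in agent if pr.state in ("merged", "closed")]
    merged = sum(pr.state == "merged" for pr in finished)
    return {
        "merge_rate": merged / len(finished) if finished else 0.0,
        "agent_pr_share": len(agent) / len(prs) if prs else 0.0,
        "median_batch_size": median([pr.lines_changed for pr in agent]) if agent else 0.0,
        "median_review_hours": median([pr.review_hours for pr in agent]) if agent else 0.0,
    }


prs = [
    PullRequest(by_agent=True, state="merged", lines_changed=40, review_hours=2.0),
    PullRequest(by_agent=True, state="closed", lines_changed=300, review_hours=6.0),
    PullRequest(by_agent=True, state="merged", lines_changed=80, review_hours=3.0),
    PullRequest(by_agent=False, state="merged", lines_changed=120, review_hours=4.0),
]
print(agent_pr_metrics(prs))
```

Running the same function per team, or per week, gives you the team comparisons and trends over time.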

Level 4: Autonomous teammate

The fourth level is where the agent stops waiting for you to assign it work.

The agent has a backlog or objective. It picks work items on its own, continuously, without a human initiating each task.

Most engineering teams already operate with one example of this pattern: Dependabot. It monitors your dependencies, notices when something is outdated or vulnerable, and opens a PR automatically, without anyone assigning it or scheduling it. The difference between that and an LLM-powered Level 4 agent is that Dependabot operates on rule-based logic, while an LLM agent operates on judgment (which is both the opportunity and the risk).

The most credible early examples are narrowly scoped maintenance objectives that are specific, scheduled, and have clear success conditions:

- Uber deployed FlakyGuard as a fully autonomous system that processes flaky test reports daily and delivers fixes without anyone assigning the work. Out of 1,115 flaky tests over six months, it reproduced 798, created fixes for 380, and landed 197 of those with developer approval.

- GitHub’s Agentic Workflows ships prebuilt scheduled agents: a Daily Test Improver that monitors coverage and adds tests to under-tested areas, and a Daily Documentation Updater that keeps docs aligned with merged PRs.

- Cursor’s Automations lets you configure agents that trigger from events — a new Linear issue, a merged PR, a PagerDuty incident — or run on a schedule.
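
The FlakyGuard numbers read most clearly as a funnel. This short Python snippet turns the reported counts into stage-by-stage conversion rates (the stage labels are paraphrased from the text above):

```python
# Stage counts reported for Uber's FlakyGuard over six months.
funnel = [
    ("flaky tests", 1115),
    ("reproduced", 798),
    ("fix created", 380),
    ("landed with developer approval", 197),
]


def conversion_rates(stages):
    """Return (stage, rate vs previous stage, rate vs first stage) for each step after the first."""
    first = stages[0][1]
    rows = []
    for (_, prev), (name, count) in zip(stages, stages[1:]):
        rows.append((name, count / prev, count / first))
    return rows


for name, step, overall in conversion_rates(funnel):
    print(f"{name:32s} {step:6.1%} of previous stage, {overall:6.1%} of all")
```

That works out to roughly 72% reproduced, 48% of those fixed, and 52% of those fixes landed, or about 18% of the original 1,115 flaky tests ending in a merged fix.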

The entry point is lower than it sounds. You can use a managed tool like the ones above without writing any agent logic yourself, or spin up a scheduled Claude Code GitHub Action pointed at a clear objective. Either way, the pattern is the same: define the objective, set the schedule, review the output.

When nobody has to coordinate these tasks, the coordination overhead (which is often more expensive than the work itself) disappears.

But all of this only works if the review is actually manageable. Autonomous agents can create review burden just as easily as they reduce coordination overhead. Keep the tasks scoped so each PR is easy to verify: small, focused changes, strong CI, clear diffs.

Use Level 4 when: a category of work recurs predictably and nobody’s excited about doing it. The best Level 4 candidates are tasks where the coordination overhead is higher than the work itself, like flaky tests, documentation drift, or dependency updates.

Not when: the tasks vary too much to define a stable objective, or when review burden would outpace the time saved. If each PR requires careful judgment to evaluate, you might have just shuffled the toil to a different place.

To figure out if your Level 4 agents are pulling their weight:

- Autonomous throughput: how many tasks does the agent complete without human initiation?

- Review time per agent PR: is the agent creating more work in review than it saves?

- Task success rate: what share of autonomously initiated tasks result in a merged PR?
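
To make the “is it pulling its weight?” question concrete, here’s a Python sketch under clearly stated assumptions: the `AgentTask` record is hypothetical, throughput is counted as merged tasks, and `est_manual_hours` is a number you’d have to estimate yourself (including the coordination a human would otherwise have spent). If `net_hours_saved` goes negative, you’ve likely just moved the toil into review.

```python
from dataclasses import dataclass


@dataclass
class AgentTask:
    # Hypothetical record for one task the agent picked up on its own.
    merged: bool
    review_hours: float       # human time spent reviewing the PR, merged or not
    est_manual_hours: float   # assumed human effort saved if merged (an estimate)


def level4_summary(tasks: list[AgentTask]) -> dict[str, float]:
    merged = [t for t in tasks if t.merged]
    review_total = sum(t.review_hours for t in tasks)
    # Only merged work counts as saved; reviewing rejected PRs is pure cost.
    saved_total = sum(t.est_manual_hours for t in merged)
    return {
        "autonomous_throughput": len(merged),
        "task_success_rate": len(merged) / len(tasks) if tasks else 0.0,
        "review_hours_per_pr": review_total / len(tasks) if tasks else 0.0,
        "net_hours_saved": saved_total - review_total,
    }


tasks = [
    AgentTask(merged=True, review_hours=0.5, est_manual_hours=2.0),
    AgentTask(merged=True, review_hours=0.25, est_manual_hours=1.0),
    AgentTask(merged=False, review_hours=1.0, est_manual_hours=3.0),
    AgentTask(merged=False, review_hours=0.25, est_manual_hours=1.5),
]
print(level4_summary(tasks))
```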

Level 5: Agentic avalanche

The fifth level is where most teams aren’t yet, no matter how it may seem on LinkedIn.

It looks like multiple agents working together: orchestrators spawning subagents, agents coordinating across tasks, with minimal human supervision over the whole system.

As far-fetched as it sounds, these orchestrator patterns are being built and deployed in production systems. Take Cursor’s FastRender project: the company spent trillions of tokens (millions of dollars) to generate over 1 million lines of code across 1,000 files and 30,000 commits. It used a three-tier hierarchical architecture, with Planner agents decomposing goals, Worker agents executing in isolation, and Judge agents evaluating quality and deciding whether to continue, all running across up to 2,000 parallel agents for nearly a week. Steve Yegge’s Wasteland, and its predecessor Gas Town, are other examples.

The key architectural concept here is the orchestrator: an agent that breaks down a complex goal and delegates to specialized subagents. The orchestrator is where human oversight lives, even if subagents run freely.
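
To make the orchestrator idea concrete, here’s a minimal Python sketch of a planner/worker/judge loop in the spirit of the three tiers described above. It’s a toy, not Cursor’s implementation: the planner, worker, and judge are plain functions passed in (in a real system each would be an agent call), a thread pool stands in for parallel, isolated agents, and anything the judge never accepts is escalated rather than retried forever.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Optional


def orchestrate(
    goal: str,
    plan: Callable[[str], list[str]],    # planner: goal -> subtasks
    work: Callable[[str, int], str],     # worker: (subtask, attempt) -> output
    judge: Callable[[str, str], bool],   # judge: (subtask, output) -> accept?
    max_attempts: int = 3,
    max_workers: int = 4,
) -> tuple[dict[str, str], list[str]]:
    """Planner/worker/judge loop. Returns accepted outputs and subtasks escalated to a human."""

    def run(subtask: str) -> tuple[str, Optional[str]]:
        # Each worker sees only its own subtask; the judge decides whether to retry.
        for attempt in range(1, max_attempts + 1):
            output = work(subtask, attempt)
            if judge(subtask, output):
                return subtask, output
        return subtask, None  # never accepted: needs human attention

    accepted: dict[str, str] = {}
    escalated: list[str] = []
    # A thread pool stands in for many agents running in parallel.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for subtask, output in pool.map(run, plan(goal)):
            if output is None:
                escalated.append(subtask)
            else:
                accepted[subtask] = output
    return accepted, escalated


# Toy stand-ins for the three agent roles (hypothetical, for illustration only).
def toy_plan(goal: str) -> list[str]:
    return [part.strip() for part in goal.split(",")]


def toy_work(subtask: str, attempt: int) -> str:
    return f"{subtask} v{attempt}"


def toy_judge(subtask: str, output: str) -> bool:
    if subtask == "layout":
        return False  # the judge never accepts this one
    return subtask != "parse html" or output.endswith("v2")  # wants a second pass


accepted, escalated = orchestrate("parse html, style, layout", toy_plan, toy_work, toy_judge)
print(accepted)   # {'parse html': 'parse html v2', 'style': 'style v1'}
print(escalated)  # ['layout']
```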

This is active experimentation at the frontier. It’s worth understanding, but it’s not yet ready to be a strategy for most teams. Dismissing it as sci-fi is equally wrong, though.

Experiment with Level 5 when: you have a large, parallelizable problem that genuinely requires multiple specialized agents working concurrently — and you’ve already mastered Levels 3 and 4. The complexity overhead is substantial, so the problem needs to justify it.

Not when: your problem is complex and sequential — in which case, a single agent working through it step by step (à la Level 2) will usually do.

If you are experimenting at this level, a few things are worth thinking through:

- Trust boundaries: what decisions can subagents make autonomously, and what requires escalation to the AI or human orchestrator?

- Humans in the loop: even if subagents run freely, someone should still be watching at the orchestrator level.

- Debugging: multi-agent failures are categorically harder to diagnose than single-agent ones, so plan for that before you build.
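
One way to make trust boundaries explicit is a policy table that routes each action a subagent wants to take, plus a log of every decision for later debugging. A hypothetical Python sketch; the action names and the default-deny rule are assumptions, not any framework’s API:

```python
from enum import Enum


class Decision(Enum):
    AUTONOMOUS = "subagent may proceed"
    ORCHESTRATOR = "escalate to the orchestrator agent"
    HUMAN = "escalate to a human"


# Hypothetical action names mapped to who may approve them.
POLICY: dict[str, Decision] = {
    "edit_file_in_sandbox": Decision.AUTONOMOUS,
    "run_tests": Decision.AUTONOMOUS,
    "open_draft_pr": Decision.ORCHESTRATOR,
    "add_dependency": Decision.ORCHESTRATOR,
    "modify_ci_config": Decision.HUMAN,
    "merge_to_main": Decision.HUMAN,
}


def route(agent_id: str, action: str, log: list[tuple[str, str, Decision]]) -> Decision:
    # Default-deny: anything not explicitly listed goes to a human.
    decision = POLICY.get(action, Decision.HUMAN)
    # Keep a trace of every decision so failures can be tied to an agent and action.
    log.append((agent_id, action, decision))
    return decision


trace: list[tuple[str, str, Decision]] = []
print(route("worker-7", "run_tests", trace).name)   # AUTONOMOUS
print(route("worker-7", "force_push", trace).name)  # HUMAN (not in the policy)
```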

The avalanche follows the terrain, and for better or worse, your guardrails are the terrain.

Picking the right level

Before you reach for a tool, ask what “done” looks like for this task. If you can describe it in a sentence with no ambiguity, an agent can probably handle it without you watching — that’s Level 3 or higher. If you can’t, you need to be in the loop, which puts you at Level 2. If you’re just looking for in-the-moment help on a file you already have open, Level 1.
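
The questions above can be written down as a tiny decision function. This Python sketch is only a rough encoding: the Level 4 branch comes from the “recurs predictably” criterion in the Level 4 section, and Level 5 is deliberately left out since it’s still experimental.

```python
def pick_level(in_the_moment_help: bool, done_fits_in_a_sentence: bool,
               recurs_predictably: bool = False) -> int:
    """Suggest an autonomy level (1-4) from a few yes/no questions about the task."""
    if in_the_moment_help:
        return 1  # help on a file you already have open
    if not done_fits_in_a_sentence:
        return 2  # "done" is ambiguous, so stay in the loop
    if recurs_predictably:
        return 4  # stable, recurring objective an agent can pick up on its own
    return 3      # well-specified one-off: hand it off, review the PR


print(pick_level(False, True))                           # 3: bug with a clear repro
print(pick_level(False, True, recurs_predictably=True))  # 4: recurring flaky-test fixes
print(pick_level(False, False))                          # 2: an ambiguous feature
```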

Getting from “we’re using AI for coding” to “we’re seeing value from this stuff” takes more than just using more AI. Most teams hit a ceiling somewhere between Level 2 and Level 3, and the reason is usually not the tool, but how purposefully they’re using it. Asking which level fits which kind of work is a good place to start.
