How we do product discovery at Swarmia

Product discovery in software engineering is half art, half discipline, and an entirely necessary part of building good products.

When done well, it solves two problems: building the right thing and building the thing right. These are different challenges, and you need to have an answer for both. Building the right thing means truly understanding what your customers need, and building the thing right means building a solution that actually works in practice, in the right increments.

People ask how we approach product discovery at Swarmia, and I figured the best way to share our process is through a real example.

So let’s look at one of our most-loved features, developer experience surveys, and how it came to be — from initial idea to thousands of survey responses in the bank.

Our three pillars had a gap

Before surveys, Swarmia did a few things really well. For example, we:

- Gave teams visibility into their core engineering metrics.

- Helped encourage healthy development practices through working agreements and Slack notifications.

- Gave leadership trustworthy and useful data about their organization’s engineering investments.

This meant we had strong capabilities in business outcomes and developer productivity — two of our three pillars. But we knew we weren’t truly delivering on developer experience without connecting the human context to engineering metrics.

Developer experience surveys had been on our roadmap to complete this picture — so the question wasn’t whether to build them, but when and how.

The product discovery process

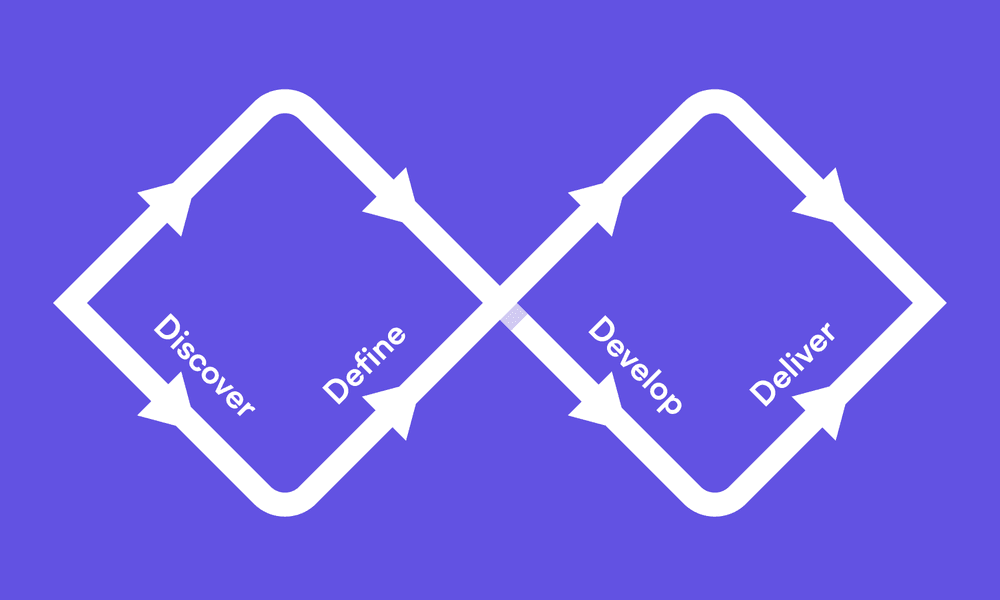

When you’re building something new, you need to cast a wide net first, then narrow down to what actually works. You start by exploring every possible angle (that’s the messy, creative part), then you focus on the solutions that make sense (that’s the practical, building part).

This is the classic double diamond approach. The widen-then-narrow cycle happens twice: once when you’re figuring out the problem, and again when you’re figuring out the solution. As you learn more about your users, you might need to repeat parts of this cycle, and you can always adjust after you ship something tangible.

Step 1: Discovering the problem

To build a solution, you need a problem. And the problem here was clear from the start: engineering organizations need to understand developer sentiment as well as track engineering metrics, and they couldn’t do both with Swarmia — yet.

And in 2023, two things converged: existing customer feedback reached a critical mass, and we saw more eager prospects listing surveys as a requirement.

The timing was right to move surveys from roadmap to active discovery.

Talk to your customers

At Swarmia, we log every piece of customer feedback in a Notion database. For surveys, we had plenty of feedback about “developer sentiment,” “team health,” and “the why behind the metrics”.

However, while historical customer feedback is an invaluable resource, there’s no substitute for just sitting down and talking to your end users.

We started with 15 discovery calls with customers of all sizes and organizational structures. The calls revealed that most already gathered developer sentiment using Google Forms, HR tools like Culture Amp, and other engineering survey tools. The common issues with these tools were manual setup and tedious analysis, questions that weren’t tailored to engineering, and unnecessary double licensing costs.

Our interviews were semi-structured and open-ended. The more interviews we did, the better questions we were able to ask. We mostly focused on exploring the problem space, although we often reserved time at the end for validating our solutions through Figma designs. We were very intentional about doing this only at the end to avoid anchoring or biasing the interviews.

The calls gave us more than a vague sense that “there’s a problem worth solving here.” After the discovery interviews, we knew:

- Why engineering organizations run surveys

- How they run them — from tools to timing to team involvement

- Who owns the process and makes decisions

- How they design questions and process results

- How they handle anonymity and communications

- What “good” looks like in their context

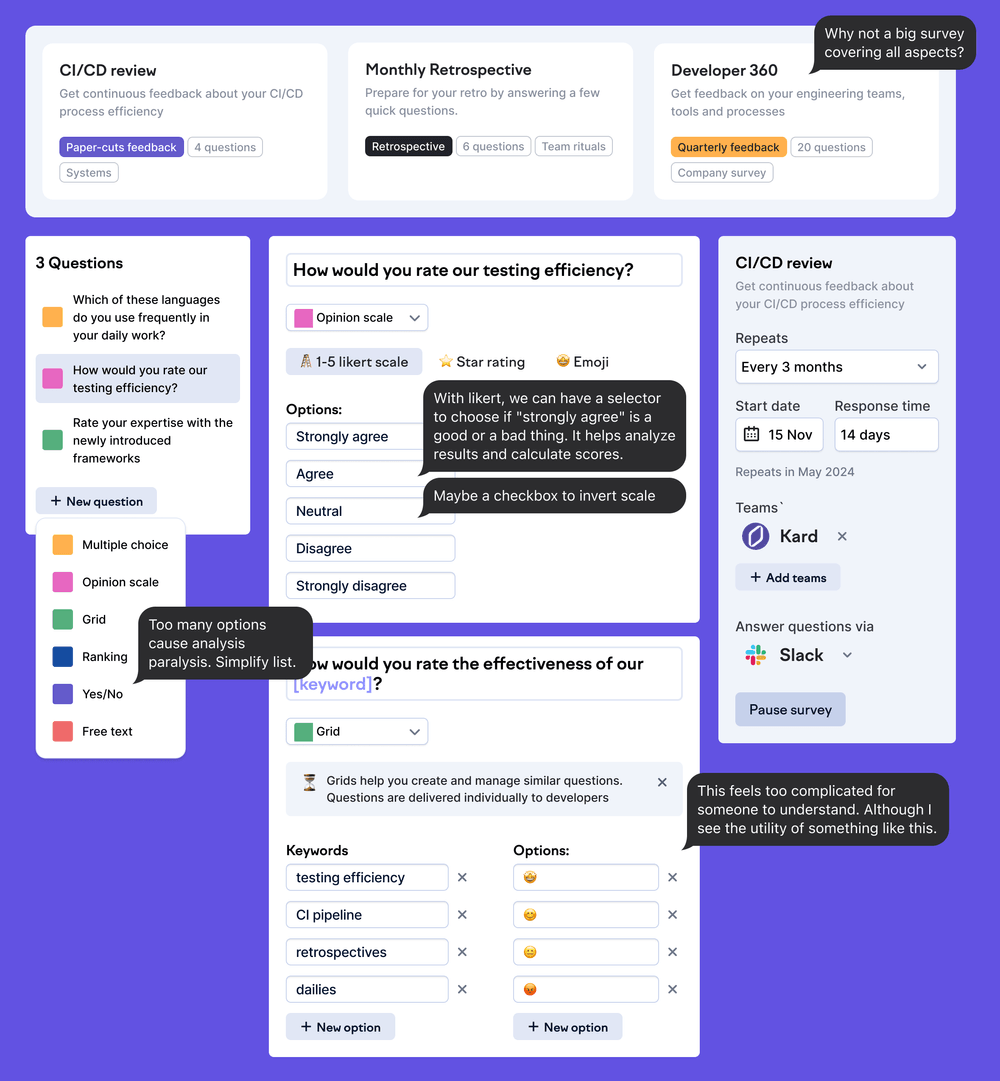

Before the interviews, we were evaluating many different mutually exclusive survey formats: paper cut surveys, trigger-based surveys, pulse surveys, and more. After the interviews, we landed on the quarterly overview survey concept, because that seemed like the most important problem to solve first.

But if we were going to build surveys, we couldn’t just be another form builder.

Step 2: Exploration

Going to the supermarket

I like to think of the early exploration phase as going to the supermarket.

You’re walking down the aisles, picking up anything that looks interesting, not sure what meal you’re going to cook yet. This chaos is intentional and productive — you need to explore broadly before you narrow down.

The goal here isn’t to find the perfect solution immediately. We want to explore the problem space without constraints and gather inspiration from everywhere. For surveys, this meant letting ourselves imagine all the possibilities before reality set in.

Exploring the survey space

No other tool connected survey results with productivity metrics. That was the missing link, and a unique opportunity for us to fill the gap.

Surveys have widely documented best practices and conventions, which is unusual when developing a new product or feature. This meant that we weren’t creating a solution from scratch. Rather, we were creating our version of developer experience surveys.

Because of this, benchmarking was super important. We analyzed over 30 survey tools and competitors — everything from enterprise solutions like Qualtrics to startup tools like Typeform. Each had something to teach us about what worked and what didn’t.

As we explored the problem space, we took notes — both in text and visuals. As a product designer, I enjoy this part of the process the most. We collected ideas like ingredients to use later.

We dug through all customer feedback that mentioned surveys, developer sentiment, or team health. Patterns started emerging, with the same requests and frustrations appearing again and again.

Survey design is a science

We realized the hardest part for teams wasn’t running a survey — it was asking the right questions.

We started designing a test survey for our team and immediately realized how hard it is to frame unambiguous, easy-to-understand questions. This helped us build empathy for customers trying to DIY their surveys.

But we knew we could do better than guesswork. We brought in a team of psychometric experts who had spent years studying how to ask questions without bias.

They provided frameworks for reducing confusion, avoiding leading questions and ensuring statistical validity — so the questions in Swarmia weren’t just random, but truly research-backed.

If you're interested, you can read more about our approach to designing survey questions and take a look at the survey questions themselves.

Step 3: Developing the idea

The next step of the product discovery process is where we transform the beautiful chaos of exploration into something we can actually build.

Generally, this stage involves a trio of key people:

- A product manager, who brings customer context and a clear connection to business goals, and keeps us grounded in the real problems

- A designer (that’s me), who visualizes solutions, thinks through user flows from multiple angles, and tries to make complex things simple

- An engineering lead, who provides technical feasibility reality checks, architectural implications, and integration possibilities

Together, we take all that supermarket shopping and figure out what meal we’re actually going to cook.

Discovery planning meetings

Once the trio has shaped something concrete from all that exploration, we bring it to the whole team. This is where our democratic product planning approach really shines.

We repeat this planning process for as many stories as it takes to get the product to our customers. In the case of surveys V1, that number was around 26 — which meant at least one 45-minute meeting for each story we shipped.

By the end of each 45-minute planning meeting, we have:

- A shared understanding of why we’re building this — and everyone can explain the purpose

- Clear, correctly sized tasks of 1-2 days each

- Identified risks and dependencies upfront

- Complete team alignment on the approach

This way, we have no waterfall handoffs or engineers “just building what I was told.” We’re all in it together, and everyone owns the outcome.

Step 4: Where theory meets reality

This next part is not strictly part of product discovery, but I think it’s valuable to include in this conversation.

Our MVP philosophy

When it comes to building, we’re ruthless about scope. The key question that drives every decision: “Is this really necessary? Can a customer use the product without it?”

We frame everything as an optimization problem — what’s the fastest path to customer value? Because every feature we add delays learning from real users. And in product development, learning beats guessing every time.

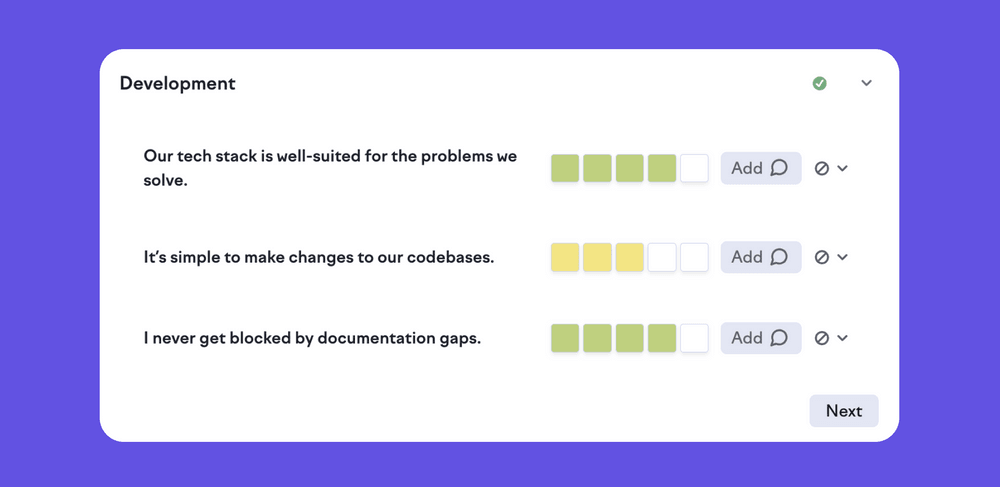

All survey products have at least these three core components: creation, responding, and reporting.

For the surveys MVP, we stripped it down to basically just the second and most crucial part: responding.

For everything else, we used bubble gum and duct tape in our database. Which, compared to what our survey product looks like now, is pretty wild to think about.

What we deliberately left out

We left out features that seemed “important” — reporting UI, automated reminders, multiple question types, industry benchmarking. The reporting UI was especially painful to cut. We had beautiful mockups in Figma showing charts, trend lines, comparison views.

But we asked ourselves: can someone analyze a CSV? Yes. Can someone run a survey without a survey? No.

So CSV exports it was. We focused on getting to market quickly to start learning from real usage, not building what we imagined users might want.
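To give a feel for what “analyzing a CSV” can look like in practice, here is a minimal sketch in Python. It is not Swarmia’s export format or tooling: the column names (`question`, `score`), the 1-5 scale, and the sample rows are all assumptions made up for illustration.

```python
import csv
import io
from collections import defaultdict
from statistics import mean


def average_score_by_question(csv_text: str) -> dict[str, float]:
    """Return the mean score per question from a survey CSV export.

    Assumes hypothetical columns 'question' and 'score' (integer, 1-5);
    a real export may be shaped differently.
    """
    scores = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        scores[row["question"]].append(int(row["score"]))
    return {question: mean(values) for question, values in scores.items()}


# Made-up sample data, only to show the shape of the input.
SAMPLE_EXPORT = """question,score
Our test suite is reliable,2
Our test suite is reliable,3
I can get code reviewed quickly,4
I can get code reviewed quickly,5
"""

if __name__ == "__main__":
    for question, avg in average_score_by_question(SAMPLE_EXPORT).items():
        print(f"{question}: {avg:.1f}")
```

A few lines like these are enough to spot the lowest-scoring questions, which is roughly the bar a CSV export had to clear for the MVP.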

This was beneficial for two reasons: it minimized risk and maximized value. It allowed us to respond quickly to things we uncovered along the way, without having to abandon things we’d already built.

In the end, what we built was a ~90% match with what we had planned way in advance. That’s a sign that our initial discovery was of high quality, and it helped us a lot. Even when you’re building incrementally, it’s good to have a plan so you don’t paint yourself into a corner.

Being our own customer zero

Being an engineering organization ourselves, we get to build and try all features internally first. This is a great way to smooth out the rough edges.

We ran our first survey internally with Google Forms. As you can imagine, manually reminding 30 engineers to complete a survey really drives home why automated reminders matter. It validated our MVP choices while building empathy for what customers would experience.

This exercise also helped us understand two of the key value propositions of our surveys:

- Creating high-quality survey questions is a real challenge, and providing them to our customers would be a serious value-add

- Being able to not only easily reflect upon survey results, but act on them, is a must

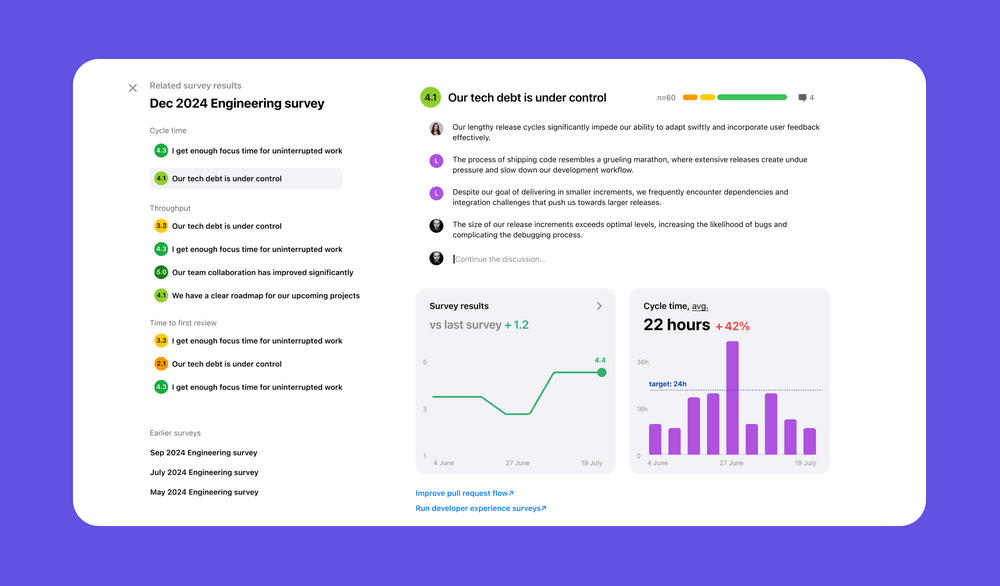

Our first external survey went live in March 2024 with AxiosHQ. Within months, teams were switching from other tools to Swarmia. By January 2025, about 30% of our customers were actively using surveys.

The hypothesis proved correct: teams were finding those “aha” moments, like discovering high cycle time wasn’t due to large PRs but an unreliable test suite making developers hesitant to deploy.

Where we are now

Our surveys product looks a whole lot different than the MVP version we shipped a year and a half ago. You can take a look at the current version on the surveys product page, but I’ll drop a few notable things here too:

- Custom topics and questions

- New question types

- Visual reporting that maps metrics in views across Swarmia to survey results

- AI tool adoption connected to developer sentiment through surveys

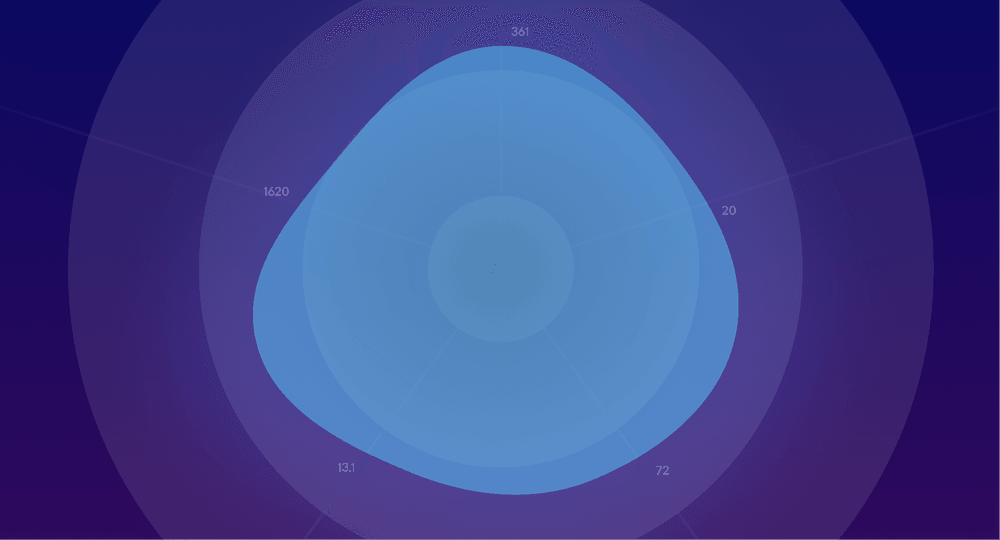

- Benchmark comparisons (p50, p75, p90)

What’s next for surveys?

Well, some things we’ll keep under wraps for now (we have What’s new in Swarmia coming up, which you can sign up for here), but what I will share are some improvements that we’ve been exploring:

- Contextual (trigger-based) surveys

- Lightweight pulse surveys

- Quick, automatic insights and AI comment summary

- Helping teams take action based on the survey results

- Breakdowns for role, tenure, and location in the results

Wrapping up (sort of)

That’s the thing about product discovery — it never really ends. We’re still learning how customers use surveys every week.

From that first problem statement of “engineering teams need to understand developer sentiment” to thousands of survey responses helping teams actually improve, it all started with these simple product discovery practices.

The process isn’t always pretty. It’s not linear. But it works. And that’s what matters.
