More than surveys: Capturing real-time data about developer experience

When you’re working on improving developer experience, one of the most challenging parts of the job is to prove that the work you’re doing is the right work, and that it’s having a real impact.

Developer experience surveys help here a bit, but the data you get is lagging, and often impacted by recency bias, sampling bias, and the squeaky wheel. It’s not that survey data isn’t useful (far from it, that stuff is gold) but it can be really dicey to set goals around survey results. It needs to be one part of a broader solution for identifying useful work and evaluating its impact.

Introducing User Experience Objectives

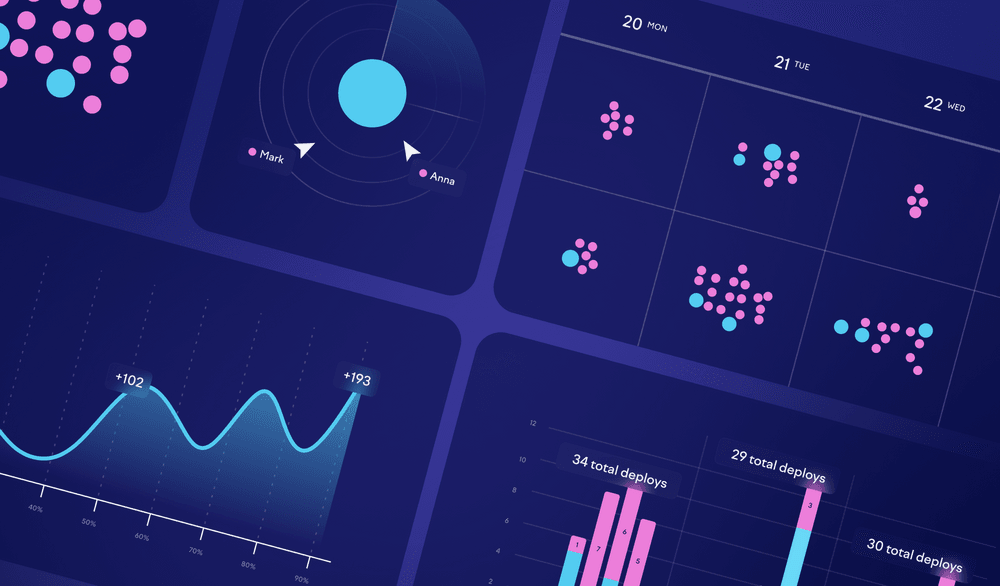

So how can you get real-time insight into whether the work you’re doing is making a difference? One of the most useful ways I’ve found is to quantify how often developers are having a “bad day,” as defined by User Experience Objectives (UXOs) that are set for each developer-facing tool from git to VSCode to internal tools and systems.

Let’s say we can agree on a few things:

- A developer should never (p99.9-ish) wait more than 60 seconds for `git pull`.

- Saving a file in an editor should not block interaction for more than two seconds @ p80 and 5 seconds @ p90.

- A developer should receive pass/fail results on their change within 10 minutes @ p80 and 20 minutes @ p90.

These are great examples of UXOs. Indeed, if we talk to a couple-dozen software engineers within a software development organization, we can probably come up with a lot of statements like this — some that are being attained today, and others that highlight opportunities to make things better for your engineers.
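
To make statements like these checkable, it can help to write them down as data. Here is a minimal sketch, assuming durations are recorded in seconds; the `Target` and `UXO` classes, the task names, and the nearest-rank percentile method are all illustrative choices of mine, not a prescribed implementation.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    percentile: float   # e.g. 80 for p80
    max_seconds: float  # allowed duration at that percentile


@dataclass(frozen=True)
class UXO:
    task: str                    # hypothetical task name
    targets: tuple[Target, ...]  # one or more percentile targets


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def is_met(uxo: UXO, durations: list[float]) -> bool:
    """A UXO is met only when every one of its percentile targets is met."""
    return all(percentile(durations, t.percentile) <= t.max_seconds for t in uxo.targets)


# The three example objectives, expressed in seconds.
UXOS = [
    UXO("git_pull", (Target(99.9, 60),)),
    UXO("file_save", (Target(80, 2), Target(90, 5))),
    UXO("ci_result", (Target(80, 600), Target(90, 1200))),
]
```

Calling `is_met(UXOS[1], save_durations)` with a day's worth of file-save timings then tells you whether the file-save objective held for that sample.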

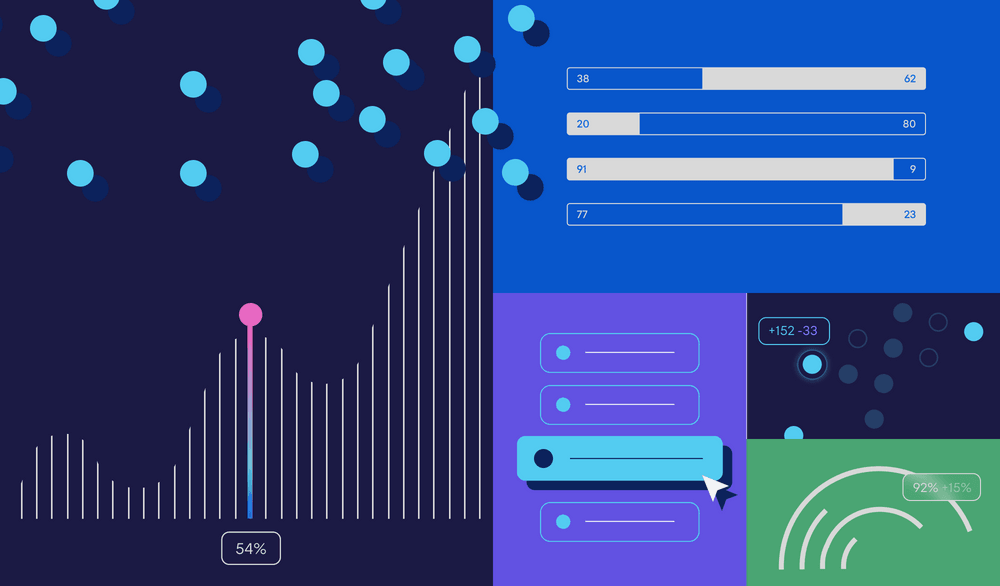

UXOs are great on their own for setting goals around particular experiences, but they become even more powerful when you look at how often they’re being achieved in aggregate: we can say that a developer is having a “bad day” if n or more of these objectives are violated in a given day. From there, we can look at what percentage of the engineering population is having a bad day on any given day, and work to reduce it.
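
As a rough sketch of that aggregation: the code below assumes a simplification where an objective counts as violated for a developer if any single event that day exceeds a per-task limit. The `LIMITS` values, the event tuple shape, and the sample data are made up for illustration.

```python
from collections import defaultdict

# Hypothetical per-event limits in seconds, loosely based on the objectives above.
LIMITS = {"git_pull": 60.0, "file_save": 2.0, "ci_result": 600.0}


def bad_day_rates(events, population, n=2):
    """Return {day: share of `population` with n or more violated objectives that day}.

    `events` is an iterable of (developer, day, task, seconds) tuples.
    """
    violated = defaultdict(set)  # (developer, day) -> tasks that breached a limit
    days = set()
    for developer, day, task, seconds in events:
        days.add(day)
        limit = LIMITS.get(task)
        if limit is not None and seconds > limit:
            violated[(developer, day)].add(task)

    rates = {}
    for day in days:
        bad = {dev for (dev, d), tasks in violated.items() if d == day and len(tasks) >= n}
        rates[day] = len(bad) / len(population)
    return rates


# Made-up sample data.
events = [
    ("maria", "2024-05-01", "file_save", 5.0),
    ("maria", "2024-05-01", "git_pull", 90.0),
    ("sam", "2024-05-01", "file_save", 3.0),
    ("lee", "2024-05-01", "ci_result", 100.0),
    ("maria", "2024-05-02", "file_save", 0.4),
]
population = {"maria", "sam", "lee", "ana"}
rates = bad_day_rates(events, population)
```

Tracking this rate per day gives you a single trend line to watch, and lowering `n` makes the definition of a bad day stricter.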

Sometimes, these objectives exist to hold the line on a tenuous system; sometimes, they exist to highlight where there’s work to be done. Either way, they do something that few other developer experience metrics can: they provide near-real-time insight into the pain that your product engineers are experiencing, and near-real-time insight into whether your efforts are making a dent in that pain.

It becomes more realistic to set quarterly goals around improvements, and more possible to record a win for developer experience without needing an extremely specific and proven plan up front — you can be more exploratory and innovative when you focus on the bad outcome you’re trying to prevent, rather than running a long project focused on a single, pre-determined system. You can also change the blend and the weight of each underlying UXO in the aggregate “bad day” metric, on a cadence that makes sense. (Let this be a reminder that there is no “done” in developer experience unless you stop writing code altogether).
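
One way to picture the "blend and weight" idea is a weighted score rather than a simple count. This is only a sketch: the weights, the threshold, and the function names are hypothetical, and you would tune them to your own tools.

```python
# Hypothetical weights; revisit them on whatever cadence suits your org.
WEIGHTS = {"git_pull": 1.0, "file_save": 0.5, "ci_result": 2.0}


def bad_day_score(violated_tasks, weights):
    """Sum the weights of the objectives a developer breached today."""
    return sum(weights.get(task, 0.0) for task in violated_tasks)


def is_bad_day(violated_tasks, weights, threshold=2.0):
    """A day is 'bad' when the weighted score reaches the threshold."""
    return bad_day_score(violated_tasks, weights) >= threshold
```

With these example weights, a slow CI result alone makes for a bad day, while a slow pull plus a slow save does not.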

When it comes to setting UXOs, I definitely encourage using percentile-based metrics whenever you can. For improvement-focused metrics, p80 seems to be a particularly useful place to slice the data in many cases: you want to include outliers, but you’re willing to accept that there will be extreme outliers that probably aren’t worth improving. On the other hand, certain metrics may lend themselves to p90, p99, or even p99.9 — and this may be especially true for “hold the line” metrics, where your goal is to make sure things don’t get worse.

Making it happen

It’s easy to make statements about how well internal tools should work. Actually implementing a metric like this in your org is going to depend on … a whole lot of things.

If you’re at the point where these sorts of things feel important, then hopefully you have established a common way for internal tools to log user-related events, like “Maria just tried to save a file. It was successful, but her editor froze for five seconds.” In addition to the user and the task and the duration of the task, you can send other generic metadata (such as branch name or environment info), or even task-specific metadata (like the file that was being saved).
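
For illustration, an event like Maria's might be captured as one structured log line. The field names and the `ToolEvent` class below are assumptions of mine; your own logging pipeline will have its own schema.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class ToolEvent:
    user: str
    tool: str
    task: str
    duration_seconds: float
    success: bool
    timestamp: float = field(default_factory=time.time)  # seconds since the epoch
    metadata: dict = field(default_factory=dict)          # generic or task-specific extras


def to_log_line(event: ToolEvent) -> str:
    """Serialize an event as a single JSON line."""
    return json.dumps(asdict(event), sort_keys=True)


# "Maria just tried to save a file. It was successful, but her editor froze for five seconds."
event = ToolEvent(
    user="maria",
    tool="editor",
    task="file_save",
    duration_seconds=5.0,
    success=True,
    metadata={"branch": "main", "file": "app.py"},  # made-up values
)
print(to_log_line(event))
```

Keeping the core fields identical across tools is what makes the later cross-tool aggregation possible.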

Hopefully you also have a way to turn these logs into queryable data, so you can start to ask questions like “How many engineers waited more than two seconds to save a file?” or “What is the p80 engineer’s experience of saving a file?” or “Are certain teams more affected by file saving wait time than others?”
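
Here is a small sketch of what those questions could look like, using an in-memory SQLite table as a stand-in for whatever store you actually have. The table layout and sample rows are invented, and the percentile is computed in Python with the nearest-rank method to keep the example portable.

```python
import math
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (user TEXT, team TEXT, task TEXT, duration_seconds REAL)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?, ?)",
    [  # made-up sample rows
        ("maria", "payments", "file_save", 5.0),
        ("maria", "payments", "file_save", 0.4),
        ("sam", "payments", "file_save", 2.5),
        ("lee", "search", "file_save", 0.3),
        ("lee", "search", "file_save", 0.6),
        ("ana", "search", "file_save", 0.5),
        ("ana", "search", "git_pull", 12.0),
    ],
)


def nearest_rank(values, p):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def engineers_over(conn, task, limit):
    """How many distinct engineers waited longer than `limit` seconds for `task`?"""
    query = "SELECT COUNT(DISTINCT user) FROM events WHERE task = ? AND duration_seconds > ?"
    return conn.execute(query, (task, limit)).fetchone()[0]


def p80_overall(conn, task):
    """The p80 duration for `task` across all events."""
    rows = conn.execute("SELECT duration_seconds FROM events WHERE task = ?", (task,))
    return nearest_rank([row[0] for row in rows], 80)


def p80_by_team(conn, task):
    """The p80 duration for `task`, split by team."""
    by_team = {}
    rows = conn.execute("SELECT team, duration_seconds FROM events WHERE task = ?", (task,))
    for team, seconds in rows:
        by_team.setdefault(team, []).append(seconds)
    return {team: nearest_rank(values, 80) for team, values in by_team.items()}
```

Comparing `p80_by_team(conn, "file_save")` across teams is one quick way to see whether the pain is concentrated in a particular part of the org.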

You may also want to consider emitting these metrics to something like Honeycomb, where you can slice and dice and explore them in a variety of dimensions, letting you spot anomalies relatively quickly.

Pitfalls to avoid

UXOs shouldn’t be confused with Service Level Objectives (SLOs): UXO breaches probably aren’t a drop-everything event. You might think of them as a working agreement with the software engineering teams your internal tools support, a statement of the behavior those teams can expect from internal tools given current funding and competing priorities.

Beware that the data you collect can also be used for evil, because it creates a central record of, well, how developers are using their time. Most of this information probably existed before in many disparate systems, but when it’s centralized, it can be tempting to some people to use the data offensively, for example to seek to identify underperformers.

For this reason, you might choose to anonymize the data before making it queryable, but know that this choice will definitely deprive you of a useful troubleshooting and storytelling tool.
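
If you do go down that road, one possible approach is to replace user names with a keyed hash before the data lands anywhere queryable. This is a sketch only: strictly speaking it is pseudonymization rather than full anonymization, and the function name and key handling here are assumptions for illustration.

```python
import hashlib
import hmac


def pseudonymize(user: str, secret_key: bytes) -> str:
    """Replace a user name with a stable, keyed token.

    The same user always maps to the same token, so per-developer
    aggregation still works, but the name itself is not stored.
    """
    digest = hmac.new(secret_key, user.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


# Hypothetical key; in practice it would live in a secrets manager, not in code.
key = b"replace-with-a-real-secret"
token = pseudonymize("maria", key)
```

Whoever holds the key can still map a token back to a person by hashing known names, so how tightly you guard that key determines how much of the troubleshooting ability you keep.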

Beyond engineering

It’s easy to imagine how you might apply this approach to experiences that engineers have that are outside the scope of the engineering organization. For example, the procurement process can be so onerous in some organizations that engineers choose a “build” decision when a “buy” decision was actually more appropriate.

If it seems like a lot of delivery friction is outside your control, you can expand your use of UXOs to highlight other delivery bottlenecks, from onerous procurement processes to time-intensive security reviews. Granted, these cases may be harder to collect data about in an automated way, but UXOs can be a great conversation starter for highlighting other parts of the software engineering process that are impeding efficient delivery.

A new currency

UXOs are a reminder that, sometimes, you need to create a new currency to capture the value created by developer experience improvements. UXOs help you focus your efforts on the improvements that matter, while still allowing exploration and innovation — they keep you connected with the lived experience of the software engineers using the tools and processes required to get their job done.

UXOs certainly aren’t the be-all, end-all metric for developer experience: no metric will ever be that. But, again: your developer ecosystem is a product whether you think it is or not. Anyone who owns a product needs to deeply understand what it’s like to use the product, and needs to seek to improve the product for its users. UXOs are a powerful way to do just that.
